Scalable and Performant ASP.NET Core Web APIs: SQL Server Isolation Level

This is another post in a series of posts on creating performant and scalable web APIs using ASP.NET core 2.0. In this post we’ll have a little look at isolation levels in SQL Server which is something that is often not thought about … until we hit performance issues …

By default, SQL Server uses the Read Committed isolation level and by default this blocks reads whilst updates take place. This can lead to scalability issues if we have lots of users reading data in addition to users writing data - particularly when the writes are heavy.

Let’s look at a simple example of simulating some users updating a contact record whilst other users get contact records.

We’ll start of with getting a benchmark for users just getting contact records. Here’s our Dapper data access code to get a contact record:

public Contact GetContactById(Guid contactId){ Contact contact = null;

using (SqlConnection connection = new SqlConnection(ConnectionString)) { connection.Open();

string sql = @"SELECT ContactId, Title, FirstName, Surname, AddressType.Type AS AddressType, Address.AddressLine1, Address.AddressLine2, Address.AddressLine3, Address.Town, Address.County, Address.Country, Address.Postcode, EmailAddressType.Type AS EmailAddressType, EmailAddress.Email AS EmailAddress, PhoneNumberType.Type As PhoneNumberType, PhoneNumber.Number AS PhoneNumber, Contact.RowVersion FROM Contact LEFT JOIN Address ON Contact.ContactPK = Address.ContactPK LEFT JOIN AddressType ON Address.AddressTypePK = AddressType.AddressTypePK LEFT JOIN EmailAddress ON Contact.ContactPK = EmailAddress.ContactPK LEFT JOIN EmailAddressType ON EmailAddress.EmailAddressTypePK = EmailAddressType.EmailAddressTypePK LEFT JOIN PhoneNumber ON Contact.ContactPK = PhoneNumber.ContactPK LEFT JOIN PhoneNumberType ON PhoneNumber.PhoneNumberTypePK = PhoneNumberType.PhoneNumberTypePK WHERE Contact.ContactId = @ContactId AND Address.IsPrimary = 1 AND EmailAddress.IsPrimary = 1 AND PhoneNumber.IsPrimary = 1";

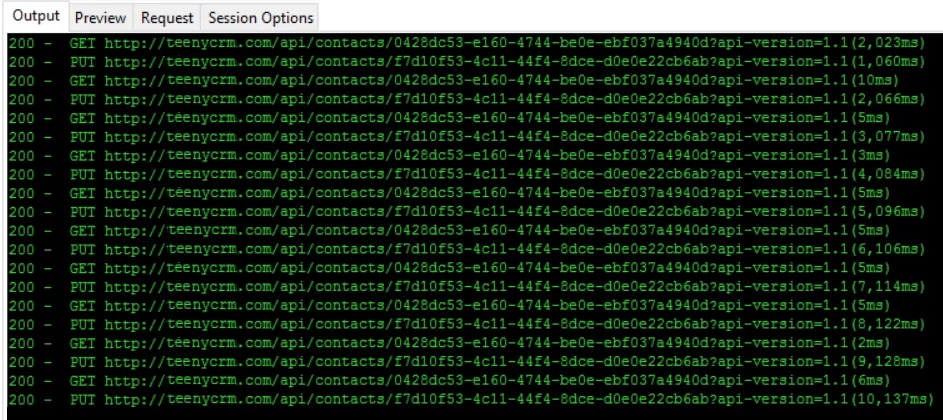

contact = connection.QueryFirstOrDefault<Contact>(sql, new { ContactId = contactId }); } return contact;}Here’s a snipet of results from our load test on the API endpoint:

On to our data access code for updating a contact record … We’ve simulated a long database write by using a Thread.Sleep resulting in the updating taking > 1 sec.

public void UpdateContactName(Contact contact){ using (IDbConnection connection = new SqlConnection(ConnectionString)) { connection.Open();

using (IDbTransaction transaction = connection.BeginTransaction()) { connection.Execute(@"UPDATE Contact SET Title = @Title, FirstName = @FirstName, Surname = @Surname FROM Contact WHERE ContactId = @ContactId", contact, transaction);

System.Threading.Thread.Sleep(1000);

transaction.Commit(); } }}Ok, lets load test some users updating a contact record whilst other users are getting the contact record:

We see that the GET requests take far longer than they were previously because they are being blocked by the PUT requests.

So, what can we do? How can we resolve this? It is tempting to use the Read Uncommitted isolation level. This appears to resolve the problem …

public void UpdateContactName(Contact contact){ using (IDbConnection connection = new SqlConnection(ConnectionString)) { connection.Open();

using (IDbTransaction transaction = connection.BeginTransaction(IsolationLevel.ReadUncommitted)) { connection.Execute(@"UPDATE Contact SET Title = @Title, FirstName = @FirstName, Surname = @Surname FROM Contact WHERE ContactId = @ContactId", contact, transaction);

System.Threading.Thread.Sleep(1000);

transaction.Commit(); } }}

… but what if the SQL did more database writes after the UPDATE on the Contact table and in the process errored and rolled back? We’d be potentially reading and returning incorrect data!

There is a much simpler solution … We can change the Read Committed isolation level behaviour to not block and instead read the current committed data. We change this on the database using the following SQL command:

ALTER DATABASE <Our database name>SET READ_COMMITTED_SNAPSHOT ON WITH ROLLBACK IMMEDIATEGOSo, no code changes - sweet!

If we now do the same load test again, the GET requests are much faster because they are not being blocked by the the PUT requests:

In summary, having READ_COMMITTED_SNAPSHOT ON can generally lead to better performance without losing any accuracy in the data.

Comments

Anderson February 15, 2018

Hey,

Nice series of posts! When I started reading I thought you were going to use the IsolationLevel.ReadUncommitted with the query, but you used it with the update. Is that right?

Maybe if your update statement was used with a select we could see some read uncommitted effect.

Carl February 16, 2018

Good question Anderson, yes, you would get the same result if you put a IsolationLevel.ReadUncommitted transaction around the GET query.

David February 18, 2018

Excellent! Brief and to the point, and best of all no actual code changes! Win-win-win!